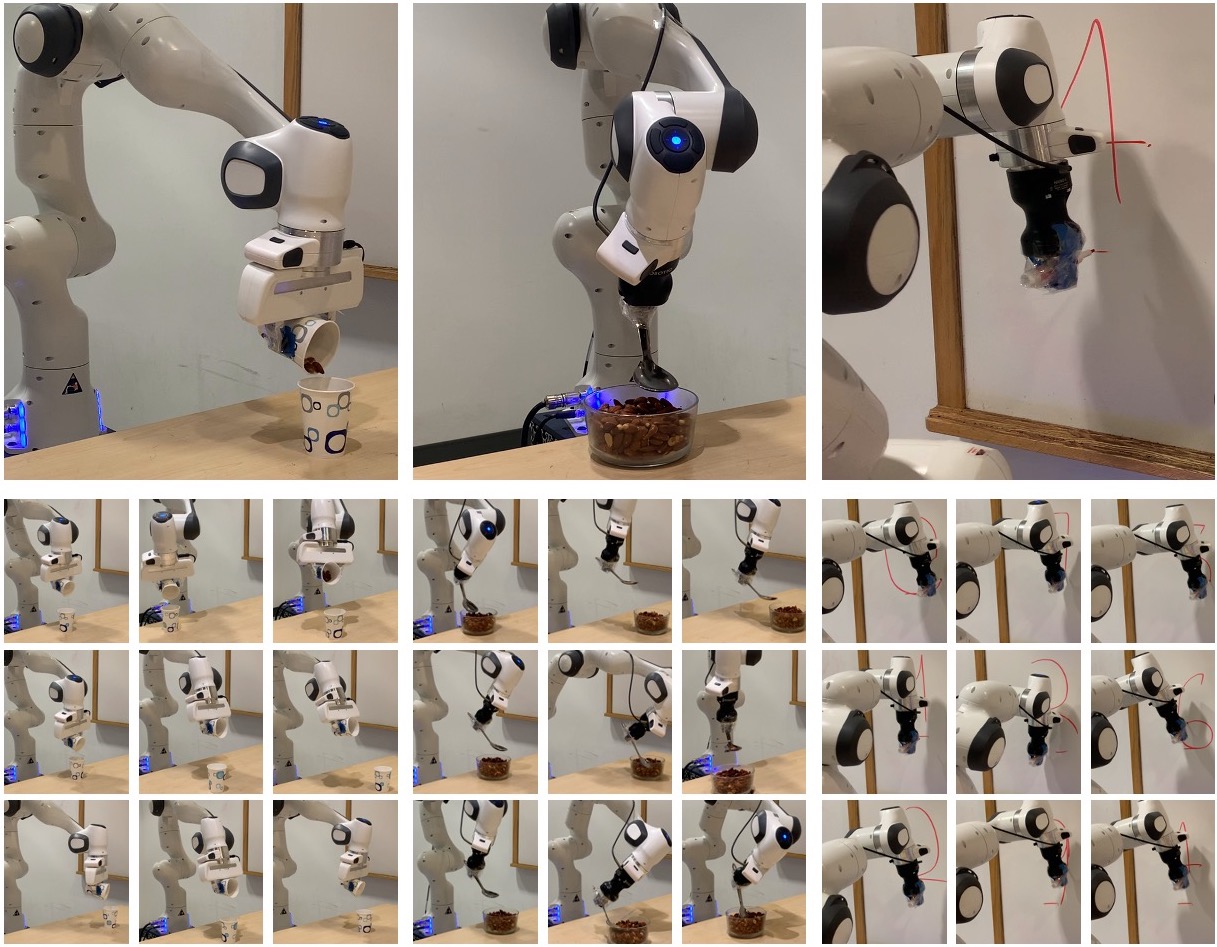

We tackle the problem of generalizing to unseen configurations for dynamic tasks in the real world while learning from high-dimensional image input. The family of nonlinear dynamical system-based methods has successfully demonstrated dynamic robot behaviors but has difficulty generalizing to unseen configurations and learning from image inputs. Recent works approach this issue by using deep network policies and reparameterizing actions to embed the structure of dynamical systems, but they still struggle in domains with diverse configurations of image goals and hence find it difficult to generalize. We address this dichotomy by embedding the structure of dynamical systems in a hierarchical deep policy learning framework called Hierarchical Neural Dynamic Policies (H-NDPs). Instead of fitting deep dynamical systems to diverse data directly, H-NDPs form a curriculum by learning local dynamical system-based policies on small regions of the state space and then distilling them into a global dynamical system-based policy that operates only from high-dimensional images. We perform extensive experiments on dynamic tasks both in the real world and in simulation.

Hierarchical Neural Dynamic Policies

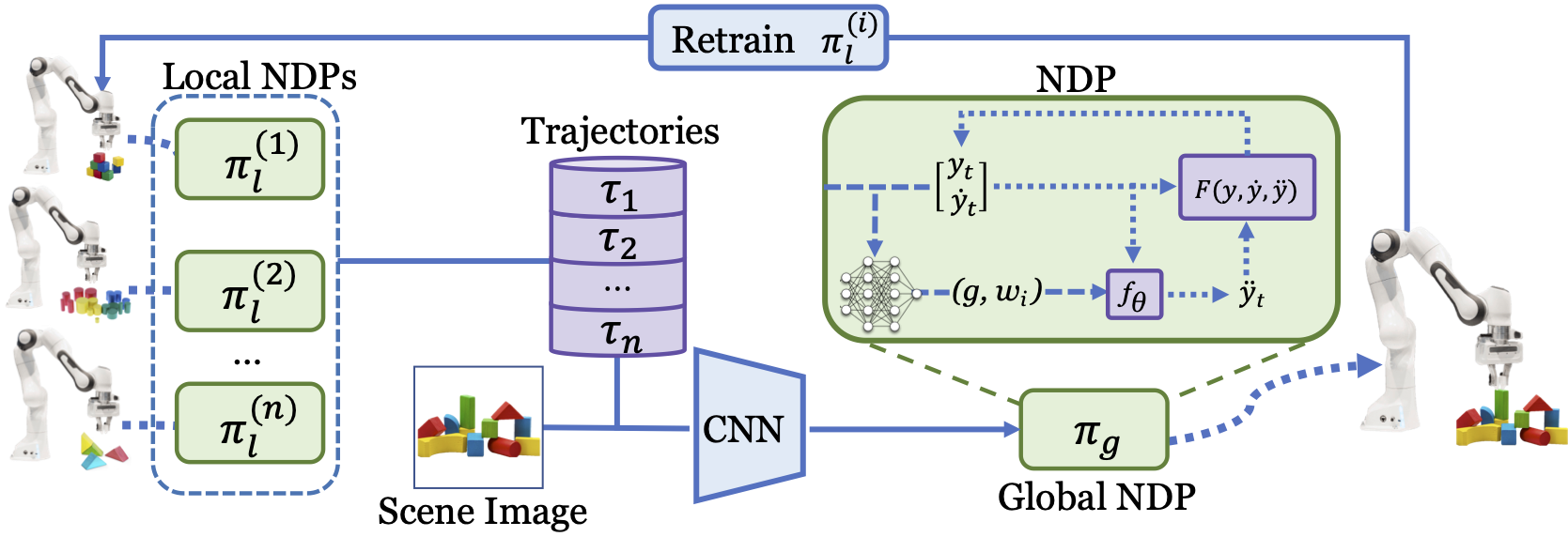

We train local Neural Dynamic Policies (NDPs) on each region of the task space from state observations. A global NDP, usually taking an image of the scene as input, learns to imitate the local experts. We then use the global NDP to retrain the local NDPs, which keeps the NDPs from diverging. These local-to-global interactions happen iteratively. NDPs are good candidates for capturing such local-to-global interactions because of their shared structure and because they operate over a smooth trajectory space.
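The iterative local-to-global loop described above can be illustrated with a toy sketch. This is not the authors' implementation (the source code is not yet released). Everything in it is an assumption made for illustration: linear least-squares models stand in for the local and global NDPs, a fixed polynomial feature map (`featurize`) stands in for image input, the scalar target `target_fn` stands in for expert supervision, and the `mix` weight is a hypothetical way of pulling local policies toward the global one. The `rollout` function integrates a simple second-order dynamical system toward a predicted goal, which is the kind of smooth trajectory space the paragraph refers to.

```python
import numpy as np

def rollout(goal, y0=0.0, alpha=25.0, beta=6.25, dt=0.01, steps=300):
    """Integrate y'' = alpha * (beta * (goal - y) - y') with small Euler steps."""
    y, yd = y0, 0.0
    traj = []
    for _ in range(steps):
        ydd = alpha * (beta * (goal - y) - yd)
        yd += ydd * dt
        y += yd * dt
        traj.append(y)
    return np.array(traj)

def fit_linear(X, t):
    """Least-squares fit of t ~ X @ w (stand-in for policy training)."""
    w, *_ = np.linalg.lstsq(X, t, rcond=None)
    return w

def target_fn(s):
    """Hypothetical supervision: desired dynamical-system goal for state s."""
    return np.sin(2.0 * s)

def local_inputs(s):
    """Local policies see the low-dimensional state directly."""
    return np.stack([np.ones_like(s), s], axis=1)

def featurize(s):
    """Hypothetical stand-in for image features seen by the global policy."""
    return np.stack([np.ones_like(s), s, s**2, s**3], axis=1)

def train_hierarchy(n_regions=4, n_per_region=50, n_iters=5, mix=0.5, seed=0):
    rng = np.random.default_rng(seed)
    # Split the task space [-1, 1] into regions and sample states in each.
    edges = np.linspace(-1.0, 1.0, n_regions + 1)
    states = [rng.uniform(lo, hi, n_per_region)
              for lo, hi in zip(edges[:-1], edges[1:])]
    global_w = np.zeros(4)
    for it in range(n_iters):
        # 1) Train one local policy per region from state observations.
        #    After the first round, targets are pulled toward the global
        #    policy so the local policies stay close to each other.
        local_ws = []
        for s in states:
            t = target_fn(s)
            if it > 0:
                t = (1.0 - mix) * t + mix * (featurize(s) @ global_w)
            local_ws.append(fit_linear(local_inputs(s), t))
        # 2) Distill: the global policy imitates the local experts.
        X = np.concatenate([featurize(s) for s in states])
        T = np.concatenate([local_inputs(s) @ w
                            for s, w in zip(states, local_ws)])
        global_w = fit_linear(X, T)
    return global_w

# Usage: predict a goal with the global policy, then roll out a trajectory.
global_w = train_hierarchy()
query = np.array([0.3])
predicted_goal = (featurize(query) @ global_w)[0]
trajectory = rollout(predicted_goal)
```

In this sketch the local and global models share the same output space (the goal of the dynamical system), which is what makes imitation in one direction and regularization in the other straightforward. An actual H-NDP would replace the least-squares fits with neural network training and the polynomial features with image encoders.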

Source Code

Coming soon!

Paper and Bibtex

Citation

@inproceedings{bahl2021hierarchical,
  title={Hierarchical Neural Dynamic Policies},
  author={Bahl, Shikhar and Gupta, Abhinav and Pathak, Deepak},
  year={2021},
  booktitle={RSS}
}